I Didn't Buy a Mac Mini and Neither Should You

Andrej Simunaj

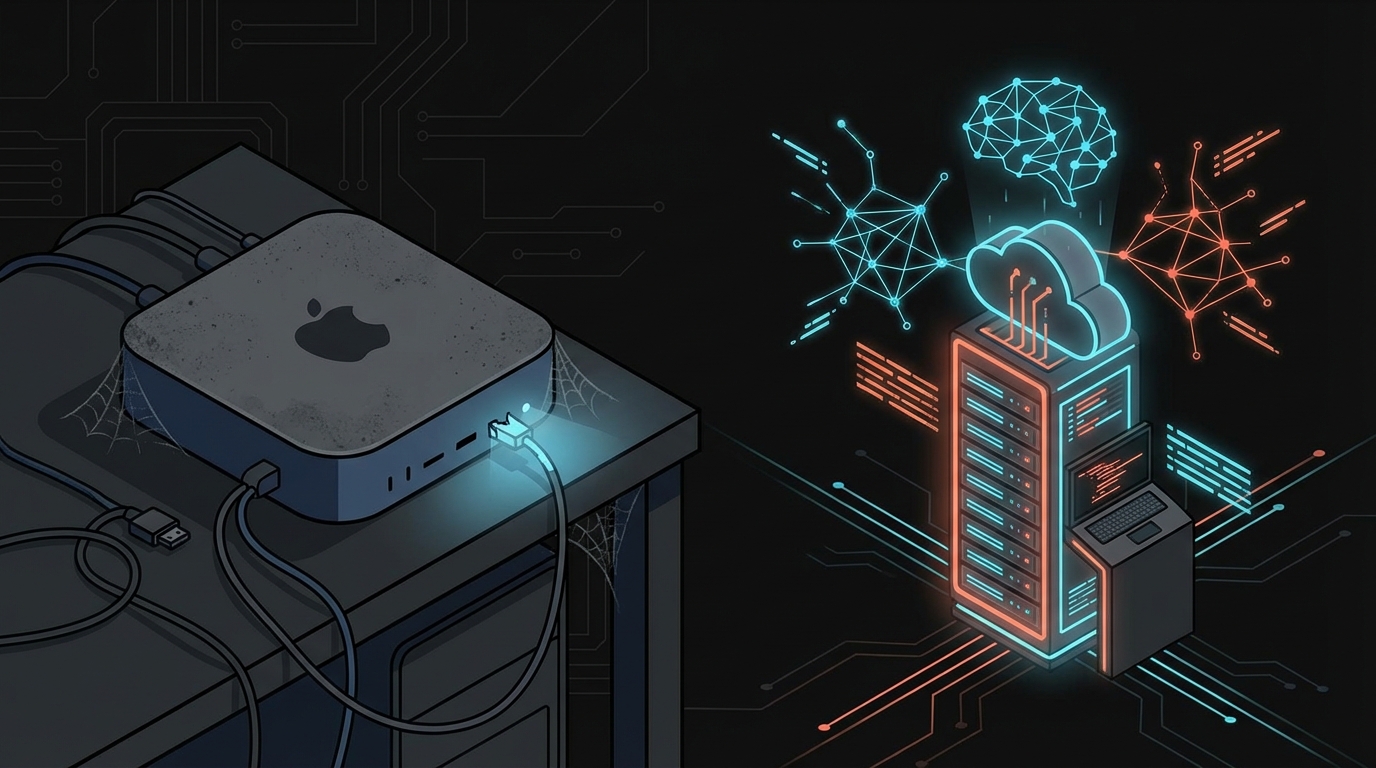

There’s been a frenzy. You’ve seen it. Developers, indie hackers, agency owners, all rushing to buy Mac Minis and Mac Studios to run local AI models. Stack them up, chain them together, run Ollama, download Gemma, maybe even squeeze out a 70B model if you max out the RAM.

I watched all of it. I didn’t buy one.

Not because I can’t afford it. Not because I don’t understand the appeal. I just couldn’t justify it at any point. And I still can’t.

The Frenzy

It started with Llama, picked up with Mixtral, and went into overdrive when Apple Silicon showed it could run large language models with surprisingly decent performance. Suddenly everyone had a take. “Buy a Mac Mini M4 Pro with 64GB, run your own models, no API costs, data stays local, you’re free.”

The YouTube videos piled up. The Reddit threads multiplied. People were buying $2,199 machines to avoid paying $20/mo API bills.

And look, I get it. The idea of owning your own AI, running on your desk, no dependencies, no vendor lock-in, it’s appealing. It scratches the same itch as self-hosting your own email server. Sounds great in theory.

In practice? Most people who self-host email eventually go back to Gmail.

The Math

Let’s actually do the math, because this is where it gets interesting.

The Mac Mini setup (serious local AI):

| Item | Cost |
|---|---|
| Mac Mini M4 Pro, 64GB | $2,199 |
| Electricity (~$10/mo for 3 years) | $360 |
| Total over 3 years | ~$2,559 |

That gets you a machine that can run 30B-70B parameter open-source models locally. No monthly fees after that. Sounds like a deal, right?

My setup (VPS + frontier models):

| Item | Cost |
|---|---|
| Hetzner VPS, 64GB RAM (~$30/mo for 3 years) | $1,080 |
| Claude Max subscription ($200/mo for 3 years) | $7,200 |
| Total over 3 years | ~$8,280 |

So yes. On paper, the Mac Mini is roughly 3x cheaper over three years. There it is. I’m not going to pretend the math favors the cloud setup in raw dollars. It doesn’t.
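If you want to check the arithmetic yourself, here’s a minimal sketch in Python. The dollar figures are the ones from the two tables above; the function name and the 36-month constant are mine, purely for illustration.

```python
MONTHS = 36  # the three-year horizon used in the tables above


def three_year_total(upfront: float, monthly: float, months: int = MONTHS) -> float:
    """One-time hardware cost plus recurring monthly costs over the horizon."""
    return upfront + monthly * months


# Mac Mini: $2,199 hardware, ~$10/mo electricity
mac_mini = three_year_total(upfront=2199, monthly=10)

# Cloud: no hardware, ~$30/mo VPS plus $200/mo Claude Max
cloud = three_year_total(upfront=0, monthly=30 + 200)

print(f"Mac Mini: ${mac_mini:,.0f}")
print(f"Cloud:    ${cloud:,.0f}")
print(f"Ratio:    {cloud / mac_mini:.1f}x")
```

Swap in your own hardware price, electricity estimate, and subscription tier; the ratio moves a lot depending on which subscription you actually need.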

But here’s the thing. That comparison is fundamentally broken. And not just because of performance.

Most people who buy Mac Minis are still paying for Claude or GPT subscriptions anyway. They’re running local models for some tasks and frontier models for anything that actually matters. So the real math for most Mac Mini buyers looks more like this:

| Item | Cost |
|---|---|
| Mac Mini M4 Pro, 64GB | $2,199 |
| Electricity (~$10/mo for 3 years) | $360 |
| Claude/GPT subscription (because local isn’t enough) | $7,200 |
| Total over 3 years | ~$9,759 |

Suddenly the Mac Mini setup is MORE expensive than mine. You paid $2,199 for a machine that you still need to supplement with frontier APIs. To compare apples to apples, the real question is: what does a VPS alone cost? Over the same three years, a $30/mo Hetzner VPS comes to $1,080. Even over six years it is only $2,160, roughly the price of the Mac Mini itself, except my VPS is in a datacenter with 99.9% uptime, accessible from anywhere, and I’m not maintaining hardware.
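The same comparison as a quick sketch, assuming the ~$30/mo VPS and $200/mo subscription prices used throughout this post. The helper and variable names are mine, for illustration only.

```python
def total(upfront: float, monthly: float, months: int) -> float:
    """One-time cost plus recurring monthly costs over a number of months."""
    return upfront + monthly * months


# Mac Mini owner who still pays for a frontier subscription, three years
mac_plus_subscription = total(upfront=2199, monthly=10 + 200, months=36)

# My setup over the same three years: VPS plus subscription
cloud_setup = total(upfront=0, monthly=30 + 200, months=36)

# The VPS on its own, over three and six years
vps_three_years = total(upfront=0, monthly=30, months=36)
vps_six_years = total(upfront=0, monthly=30, months=72)

print(f"Mac Mini + subscription: ${mac_plus_subscription:,.0f}")
print(f"VPS + subscription:      ${cloud_setup:,.0f}")
print(f"VPS alone, 3 years:      ${vps_three_years:,.0f}")
print(f"VPS alone, 6 years:      ${vps_six_years:,.0f}")
```

The subscription dominates both totals. Once you pay for it either way, the hardware is the only line item left to argue about.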

The Reality

You’re not comparing the same thing. You’re comparing a Toyota Corolla to a Formula 1 car and saying the Corolla wins because it costs less.

The Mac Mini runs open-source models. The best of them, like GLM-5.1, score around 77.8% on SWE-bench Verified. That’s genuinely impressive for open source. But Claude Opus 4.6 scores 80.8%. And Claude Mythos Preview? 93.9%.

But benchmarks don’t tell the real story. I’ve been using AI to write 100% of my code for over two years. I’ve tried local models. I’ve tried open-source models via API. And every single time I go back to a frontier model, the difference is night and day. It’s not 5% better. It’s a completely different experience.

Local models are great at autocomplete. They can fill in functions, generate boilerplate, maybe handle simple refactoring. But ask them to architect a system, debug a complex integration, or reason through a multi-step deployment pipeline? They fall apart. Or they get you 80% there and the last 20% costs you three hours of debugging that a frontier model would have gotten right the first time.

Those three hours you just lost? That’s your “savings” gone.

I run a product studio. I build and operate TranscriptAPI, Recapio, Cascady, and CRHQ. Every hour I save is an hour I can spend shipping features, talking to customers, or thinking about strategy. At my billing rate, the $200/mo Claude subscription pays for itself in the first week of every month.

What Actually Matters

Here’s what nobody talks about when they’re showing off their Mac Mini cluster on Twitter.

1. Access from anywhere

My VPS runs 24/7 in a datacenter. I can connect to it from my laptop, my phone, a hotel lobby in Tokyo, a coffee shop in Zagreb. My AI agents are always running, always available.

A Mac Mini at home? That’s great until your ISP goes down. Or you’re traveling. Or your power flickers. Or you want to work from somewhere that isn’t your desk.

I built CRHQ specifically around this architecture. AI agents running on cloud infrastructure, accessible from anywhere, delegating work to each other autonomously. That only works if your infrastructure is always on, always reachable. A Mac Mini under your desk doesn’t cut it.

2. Real uptime

Hetzner gives me 99.9%+ SLA. My VPS has been up for months at a time without a hiccup. Meanwhile, everyone I know who runs a Mac Mini “server” has stories. The cat unplugged it. macOS decided to update at 3am. The SSD filled up. The fan got loud so they moved it to the closet and now it overheats.

A datacenter is built for uptime. Your home office is not.
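To put the 99.9% figure in perspective, here’s a rough sketch of how much downtime an availability percentage actually allows. The function name is mine, and the 30-day month is a simplifying assumption.

```python
def allowed_downtime_hours(availability_pct: float, period_hours: float) -> float:
    """Hours of downtime permitted in a period at a given availability percentage."""
    return (1 - availability_pct / 100) * period_hours


HOURS_PER_YEAR = 365 * 24
HOURS_PER_MONTH = 30 * 24  # assumption: a 30-day month

per_year_hours = allowed_downtime_hours(99.9, HOURS_PER_YEAR)
per_month_minutes = allowed_downtime_hours(99.9, HOURS_PER_MONTH) * 60

print(f"99.9% allows about {per_year_hours:.2f} hours of downtime per year")
print(f"99.9% allows about {per_month_minutes:.1f} minutes of downtime per month")
```

That works out to under nine hours a year. One bad ISP outage or one surprise macOS update at home can eat a large share of that budget in a single evening.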

3. No hardware depreciation

That $2,199 Mac Mini M4 Pro you just bought? The M5 is coming later this year. Then M6. Then M7. Every generation makes your hardware less competitive. In three years, you’re running last-generation silicon while frontier models have leaped two or three generations ahead.

My VPS? I cancel it and spin up a new one with better specs. Takes five minutes. No hardware to sell on eBay.

4. Scalability

Need more power? I upgrade my VPS plan. Need less? I downgrade. Need a second server for a specific project? I spin one up. Need 10 servers for a load test? Done.

With a Mac Mini, you’re stuck with what you bought. Need more RAM? Buy another machine. Need more storage? Buy another machine. Need more compute? Buy. Another. Machine.

5. Redundancy and backups

I take automated snapshots of my VPS. I have offsite backups. If my server dies, I restore from a snapshot and I’m back in 20 minutes.

Your Mac Mini’s SSD dies? I hope you had Time Machine set up. And I hope the backup drive didn’t fail too.

My Setup

I’m not theorizing. This is how I actually work, every single day.

I run multiple VPS instances on Hetzner. My entire operation, every product, every AI agent, every deployment, runs on cloud infrastructure. CRHQ, the AI agent platform I built, is literally designed around this concept: agents running on remote servers, coordinating work, deploying code, managing infrastructure, all without me needing to be in any specific location.

My Claude subscription costs me $200/mo. Sometimes I cycle through multiple accounts because I hit the limits, so call it $400-600/mo in heavy months. That sounds expensive until you realize these agents are writing production code, managing deployments, creating marketing content, analyzing data, and doing it at a level that no local model can touch.

The total cost of my cloud infrastructure, including all VPS instances, API subscriptions, and services? Maybe $500-800/mo. Over three years, that’s $18,000-$28,800.

Compare that to stacking Mac Minis: you’d need multiple machines ($6,000-$10,000), networking equipment, backup solutions, a UPS for power outages, and you’d STILL be running inferior models. Oh, and you’d need to be home to fix things when they break.

When Local Does Make Sense

I want to be fair. There ARE legitimate reasons to run local:

Regulated industries. If you’re in healthcare, finance, or defense and your compliance team says data cannot leave your premises, then yes, local it is. But that’s not most of us. That’s not the indie hacker buying a Mac Mini because a YouTuber told them to.

Extreme privacy requirements. If you’re processing genuinely sensitive data and you can’t risk any third-party exposure, even with enterprise API agreements, local inference makes sense. But again, this applies to maybe 5% of the people buying Mac Minis right now.

Offline environments. If you’re building something that needs to work without internet (edge devices, field operations, air-gapped systems), local models are the only option. Totally valid.

Experimentation and learning. Want to understand how models work? Fine-tune something? Play with quantization? A Mac Mini is a great learning tool. Just don’t confuse a hobby with a production strategy.

For everyone else? For the small business owner, the marketing agency, the 10-person startup, the solo developer, the freelancer? Cloud wins. It’s not even close.

And here’s the kicker: if open-source models ever do reach frontier-level performance, nothing prevents me from deploying them on a VPS. I can spin up a high-RAM cloud instance, install Ollama, and run whatever model I want. I know Apple Silicon has some optimization advantages for local inference, but I’d rather have the flexibility of cloud infrastructure than be locked into hardware I bought two years ago. The VPS approach keeps every door open.
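For what it’s worth, talking to Ollama looks the same whether it runs on your desk or on a VPS. Here’s a minimal sketch, assuming Ollama is serving on its default port (11434) on the machine you’re calling from and that you’ve already pulled a model. `your-model` is a placeholder, and the helper names are mine.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(model: str, prompt: str, url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming generate request for Ollama's HTTP API."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str, url: str = OLLAMA_URL, timeout: float = 120.0) -> str:
    """Send the prompt and return the generated text from the JSON reply."""
    with urllib.request.urlopen(build_request(model, prompt, url), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Example call (needs a running Ollama server and a pulled model):
# print(generate("your-model", "Summarize this changelog in one sentence."))
```

Nothing in that code cares what hardware sits behind the URL, which is the point: the model server can move between machines without the calling code changing.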

The Security Argument

“But what about security? I don’t want my data on someone else’s server!”

I hear this a lot. And I understand the instinct. But let me ask you something.

Your email is on Google’s servers. Your code is on GitHub’s servers. Your files are on Dropbox or iCloud. Your payments go through Stripe. Your customer data lives in a database hosted by AWS or DigitalOcean or Hetzner.

You’ve already decided to trust the cloud with everything that matters. Why is AI inference the line you won’t cross?

A properly hardened VPS with SSH key authentication, encrypted disks, firewall rules, and regular security audits is more secure than a Mac Mini sitting on your home network behind a consumer-grade router with a default password.

If your concern is that AI providers will train on your data, read the enterprise agreements. Claude’s API doesn’t train on your inputs. Neither does GPT’s enterprise tier. This is a solved problem.

The Bottom Line

The Mac Mini frenzy is driven by the same impulse that makes people buy home gym equipment in January. It feels productive. It feels like you’re taking control. But six months later, the equipment is collecting dust and you’re still not going to the gym.

Ship products. Don’t collect hardware.

The goal was never to run a model. The goal is to build things, solve problems, make money, create value. The model is a tool. And the best tool for the job right now, and for the foreseeable future, is a frontier model running in the cloud, accessible from anywhere, backed by real infrastructure.

I didn’t buy a Mac Mini. I bought a $30/mo VPS and a $200/mo Claude subscription. And with that, I run an entire product studio.

You probably should too.

Andrej Simunaj is the founder of Zero Point Studio, an AI product studio and engineering agency building TranscriptAPI, Recapio, Cascady, and CRHQ.